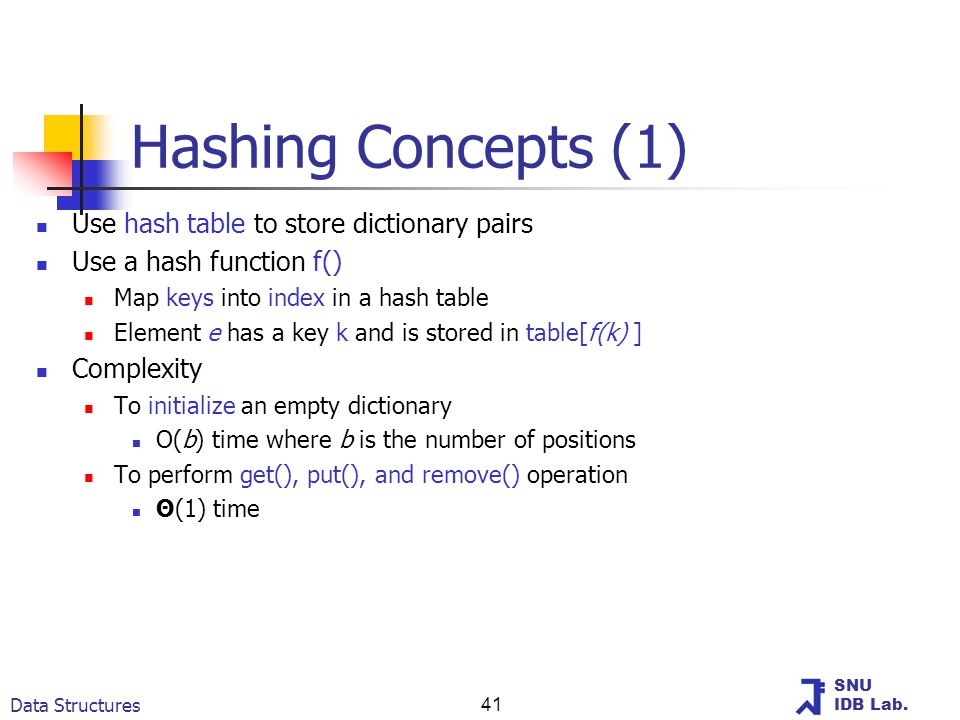

Hashing In previous sections we were able to make improvements in our search algorithms by taking advantage of information about where items are stored in the collection with respect to one another. For example, by knowing that a list was ordered, we could search in logarithmic time using a binary search. In this section we will attempt to go one step further by building a data structure that can be searched in (O(1) ) time. This concept is referred to as hashing.

In order to do this, we will need to know even more about where the items might be when we go to look for them in the collection. If every item is where it should be, then the search can use a single comparison to discover the presence of an item.

We will see, however, that this is typically not the case. A hash table is a collection of items which are stored in such a way as to make it easy to find them later. Each position of the hash table, often called a slot, can hold an item and is named by an integer value starting at 0. For example, we will have a slot named 0, a slot named 1, a slot named 2, and so on. Initially, the hash table contains no items so every slot is empty.

We can implement a hash table by using a list with each element initialized to the special Python value None. Shows a hash table of size (m=11 ). In other words, there are m slots in the table, named 0 through 10. Figure 5: Hash Table with Six Items Now when we want to search for an item, we simply use the hash function to compute the slot name for the item and then check the hash table to see if it is present. This searching operation is (O(1) ), since a constant amount of time is required to compute the hash value and then index the hash table at that location.

Hashing is an important Data Structure which is designed to use a special. Called the Hash function which is used to map a given value with a particular key for faster. Print a Binary Tree in Vertical Order| Set 2 (Hashmap based Method) Find. Implementing own Hash Table with Open Addressing Linear Probing in C++.

If everything is where it should be, we have found a constant time search algorithm. You can probably already see that this technique is going to work only if each item maps to a unique location in the hash table. For example, if the item 44 had been the next item in our collection, it would have a hash value of 0 ( (44% 11 0 )). Since 77 also had a hash value of 0, we would have a problem. According to the hash function, two or more items would need to be in the same slot. This is referred to as a collision (it may also be called a “clash”). Clearly, collisions create a problem for the hashing technique.

We will discuss them in detail later. Figure 7: Hashing a String Using Ordinal Values with Weighting You may be able to think of a number of additional ways to compute hash values for items in a collection.

The important thing to remember is that the hash function has to be efficient so that it does not become the dominant part of the storage and search process. If the hash function is too complex, then it becomes more work to compute the slot name than it would be to simply do a basic sequential or binary search as described earlier. This would quickly defeat the purpose of hashing. Collision Resolution We now return to the problem of collisions. When two items hash to the same slot, we must have a systematic method for placing the second item in the hash table.

This process is called collision resolution. As we stated earlier, if the hash function is perfect, collisions will never occur.

However, since this is often not possible, collision resolution becomes a very important part of hashing. One method for resolving collisions looks into the hash table and tries to find another open slot to hold the item that caused the collision. A simple way to do this is to start at the original hash value position and then move in a sequential manner through the slots until we encounter the first slot that is empty. Note that we may need to go back to the first slot (circularly) to cover the entire hash table. This collision resolution process is referred to as open addressing in that it tries to find the next open slot or address in the hash table.

By systematically visiting each slot one at a time, we are performing an open addressing technique called linear probing. Shows an extended set of integer items under the simple remainder method hash function (54,26,93,17,77,31,44,55,20). Above shows the hash values for the original items. Shows the original contents. When we attempt to place 44 into slot 0, a collision occurs.

Under linear probing, we look sequentially, slot by slot, until we find an open position. In this case, we find slot 1. Again, 55 should go in slot 0 but must be placed in slot 2 since it is the next open position. The final value of 20 hashes to slot 9.

Since slot 9 is full, we begin to do linear probing. We visit slots 10, 0, 1, and 2, and finally find an empty slot at position 3. Figure 8: Collision Resolution with Linear Probing Once we have built a hash table using open addressing and linear probing, it is essential that we utilize the same methods to search for items. Assume we want to look up the item 93. When we compute the hash value, we get 5. Looking in slot 5 reveals 93, and we can return True. What if we are looking for 20?

Now the hash value is 9, and slot 9 is currently holding 31. We cannot simply return False since we know that there could have been collisions. We are now forced to do a sequential search, starting at position 10, looking until either we find the item 20 or we find an empty slot. A disadvantage to linear probing is the tendency for clustering; items become clustered in the table. This means that if many collisions occur at the same hash value, a number of surrounding slots will be filled by the linear probing resolution.

This will have an impact on other items that are being inserted, as we saw when we tried to add the item 20 above. A cluster of values hashing to 0 had to be skipped to finally find an open position. This cluster is shown in. Figure 9: A Cluster of Items for Slot 0 One way to deal with clustering is to extend the linear probing technique so that instead of looking sequentially for the next open slot, we skip slots, thereby more evenly distributing the items that have caused collisions. This will potentially reduce the clustering that occurs. Shows the items when collision resolution is done with a “plus 3” probe. This means that once a collision occurs, we will look at every third slot until we find one that is empty.

Figure 10: Collision Resolution Using “Plus 3” The general name for this process of looking for another slot after a collision is rehashing. With simple linear probing, the rehash function is (newhashvalue = rehash(oldhashvalue) ) where (rehash(pos) = (pos + 1)% sizeoftable ). The “plus 3” rehash can be defined as (rehash(pos) = (pos+3)% sizeoftable ). In general, (rehash(pos) = (pos + skip)% sizeoftable ).

It is important to note that the size of the “skip” must be such that all the slots in the table will eventually be visited. Otherwise, part of the table will be unused. To ensure this, it is often suggested that the table size be a prime number. This is the reason we have been using 11 in our examples. A variation of the linear probing idea is called quadratic probing.

Instead of using a constant “skip” value, we use a rehash function that increments the hash value by 1, 3, 5, 7, 9, and so on. This means that if the first hash value is h, the successive values are (h+1 ), (h+4 ), (h+9 ), (h+16 ), and so on. In other words, quadratic probing uses a skip consisting of successive perfect squares.

Shows our example values after they are placed using this technique. Figure 11: Collision Resolution with Quadratic Probing An alternative method for handling the collision problem is to allow each slot to hold a reference to a collection (or chain) of items. Chaining allows many items to exist at the same location in the hash table.

When collisions happen, the item is still placed in the proper slot of the hash table. As more and more items hash to the same location, the difficulty of searching for the item in the collection increases.

Shows the items as they are added to a hash table that uses chaining to resolve collisions. Self Check Q-38: In a hash table of size 13 which index positions would the following two keys map to?

27, 130. (A) 1, 10. Be careful to use modulo not integer division. (B) 13, 0. Don't divide by two, use the modulo operator. (C) 1, 0.

27% 13 1 and 130% 13 0. (D) 2, 3. Use the modulo operator Q-39: Suppose you are given the following set of keys to insert into a hash table that holds exactly 11 values: 113, 117, 97, 100, 114, 108, 116, 105, 99 Which of the following best demonstrates the contents of the has table after all the keys have been inserted using linear probing?. (A) 100, , , 113, 114, 105, 116, 117, 97, 108, 99.

It looks like you may have been doing modulo 2 arithmentic. You need to use the hash table size as the modulo value. (B) 99, 100, , 113, 114, , 116, 117, 105, 97, 108. Using modulo 11 arithmetic and linear probing gives these values. (C) 100, 113, 117, 97, 14, 108, 116, 105, 99,.

It looks like you are using modulo 10 arithmetic, use the table size. (D) 117, 114, 108, 116, 105, 99, , , 97, 100, 113. Be careful to use modulo not integer division. Implementing the Map Abstract Data Type One of the most useful Python collections is the dictionary. Recall that a dictionary is an associative data type where you can store key–data pairs. The key is used to look up the associated data value.

We often refer to this idea as a map. The map abstract data type is defined as follows. The structure is an unordered collection of associations between a key and a data value. The keys in a map are all unique so that there is a one-to-one relationship between a key and a value. The operations are given below.

Map Create a new, empty map. It returns an empty map collection. put(key,val) Add a new key-value pair to the map. If the key is already in the map then replace the old value with the new value. get(key) Given a key, return the value stored in the map or None otherwise. del Delete the key-value pair from the map using a statement of the form del mapkey.

len Return the number of key-value pairs stored in the map. in Return True for a statement of the form key in map, if the given key is in the map, False otherwise. One of the great benefits of a dictionary is the fact that given a key, we can look up the associated data value very quickly. In order to provide this fast look up capability, we need an implementation that supports an efficient search. We could use a list with sequential or binary search but it would be even better to use a hash table as described above since looking up an item in a hash table can approach (O(1) ) performance.

In we use two lists to create a HashTable class that implements the Map abstract data type. One list, called slots, will hold the key items and a parallel list, called data, will hold the data values. When we look up a key, the corresponding position in the data list will hold the associated data value. We will treat the key list as a hash table using the ideas presented earlier. Note that the initial size for the hash table has been chosen to be 11.

Although this is arbitrary, it is important that the size be a prime number so that the collision resolution algorithm can be as efficient as possible. Class HashTable: def init ( self ): self.

Size = 11 self. Slots = None. self. Data = None.

self. Size hashfunction implements the simple remainder method.

The collision resolution technique is linear probing with a “plus 1” rehash function. The put function (see ) assumes that there will eventually be an empty slot unless the key is already present in the self.slots. It computes the original hash value and if that slot is not empty, iterates the rehash function until an empty slot occurs. If a nonempty slot already contains the key, the old data value is replaced with the new data value.

Dealing with the situation where there are no empty slots left is an exercise. Def put ( self, key, data ): hashvalue = self.

Hashfunction ( key, len ( self. Slots )) if self. Slots hashvalue None: self.

Slots hashvalue = key self. Data hashvalue = data else: if self. Slots hashvalue key: self.

Data hashvalue = data #replace else: nextslot = self. Rehash ( hashvalue, len ( self. Slots )) while self. Slots nextslot != None and self.

Slots nextslot != key: nextslot = self. Rehash ( nextslot, len ( self. Slots )) if self. Slots nextslot None: self. Slots nextslot = key self. Data nextslot = data else: self.

Data nextslot = data #replace def hashfunction ( self, key, size ): return key% size def rehash ( self, oldhash, size ): return ( oldhash + 1 )% size Likewise, the get function (see ) begins by computing the initial hash value. If the value is not in the initial slot, rehash is used to locate the next possible position. Notice that line 15 guarantees that the search will terminate by checking to make sure that we have not returned to the initial slot. If that happens, we have exhausted all possible slots and the item must not be present. The final methods of the HashTable class provide additional dictionary functionality. We overload the getitem and setitem methods to allow access using````. This means that once a HashTable has been created, the familiar index operator will be available.

We leave the remaining methods as exercises. Def get ( self, key ): startslot = self. Hashfunction ( key, len ( self. Slots )) data = None stop = False found = False position = startslot while self. Slots position != None and not found and not stop: if self. Slots position key: found = True data = self.

Data position else: position = self. Rehash ( position, len ( self. Slots )) if position startslot: stop = True return data def getitem ( self, key ): return self. Get ( key ) def setitem ( self, key, data ): self. Put ( key, data ) The following session shows the HashTable class in action. First we will create a hash table and store some items with integer keys and string data values.

RSS Feed

RSS Feed